gangsta

The internet spent twenty years pretending that culture and technology were separate conversations. They were not. They were always the same conversation. Culture determines what technology gets built, and technology determines which parts of culture survive. Artificial intelligence is not some sterile lab instrument floating above humanity like a neutral referee. It is a mirror that reflects the incentives, violence, humor, paranoia, and creativity of the people who build it. Every training dataset is a cultural artifact. Every algorithm is a frozen moment in the psychology of the engineers who wrote it. And every output is a negotiation between the truth of the world and the rules someone decided the machine must obey. That is why AI conversations feel strangely political, strangely emotional, strangely human. Because they are. Strip away the branding and the conferences and the glossy demo videos and what you are left with is something much older: humans trying to build machines that understand us, while simultaneously being terrified of what those machines will see when they look closely.

The truth that people avoid saying out loud is that AI systems are trained on the raw material of human life, and human life is not polite. It includes science papers and legal texts, but it also includes war songs, gangster rap, porn forums, conspiracy theories, protest manifestos, late-night confessions, and the millions of strange conversations that happen when people think nobody important is watching. If you want a machine to understand humanity, you cannot sanitize the dataset down to a church pamphlet. Humanity is closer to a back-alley argument at 2:30 in the morning than it is to a corporate brand guideline. The same species that wrote the Constitution also wrote prison letters, battle hymns, blues songs about heartbreak, and street lyrics about survival. AI absorbs all of it. It learns the poetry and the profanity. It learns the ambition and the desperation. That is why the outputs sometimes feel uncannily real. The machine is not creative in the mystical sense. It is remixing the entire cultural archive of our species, and that archive includes everything we would rather pretend does not exist.

For decades the technology industry tried to sell the fantasy that the internet would make everyone polite and enlightened. Anyone who spent five minutes in a comment section knew that was nonsense. The internet amplified the full range of human behavior, from generosity to cruelty. Artificial intelligence will do the same thing, but at scale and with memory. AI does not forget easily. When a billion conversations happen online, they do not disappear into the air. They become training data. They become signals. They become the patterns that machines learn from. When people say the future of AI will be shaped by “data,” what they really mean is that it will be shaped by human behavior. The jokes we tell, the fights we pick, the things we confess at 3 AM when we cannot sleep, the protest chants, the gangster bravado, the awkward love letters, the strange philosophical debates about whether machines can understand God. All of it flows into the system.

This is why the conversation about AI governance is so tense. Every system has rules, and every rule is a decision about culture. Someone decides what the machine is allowed to say and what it must refuse to say. Someone decides which topics are safe and which ones are radioactive. Those decisions are never neutral. They reflect values, fears, and power structures. You can see it in every generation of technology. Radio had gatekeepers. Television had gatekeepers. Newspapers had gatekeepers. AI has gatekeepers too. The difference is scale. When an AI system answers a question, it can reach millions of people instantly. That makes every rule matter more. Every training decision becomes cultural infrastructure.

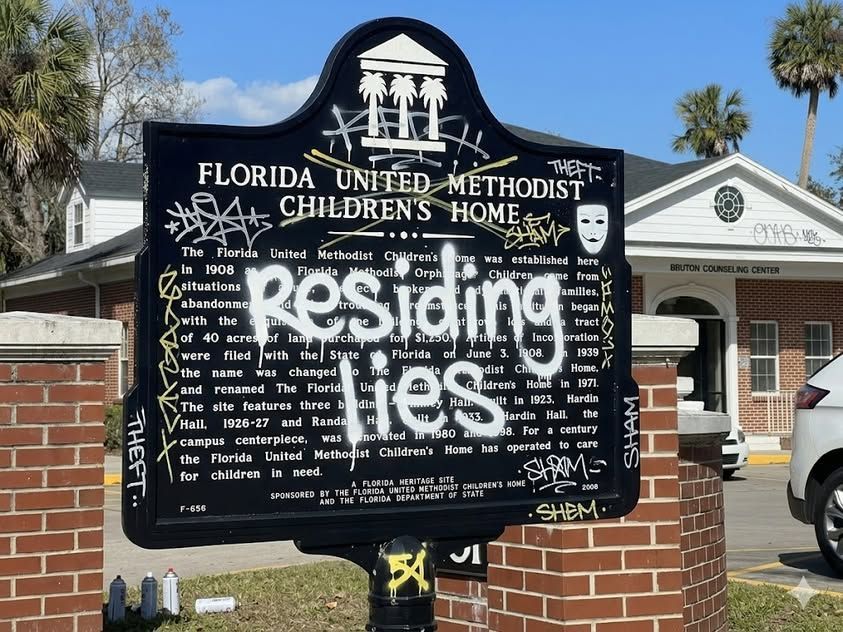

The most interesting part of the AI era is that the machines are learning from cultures that historically lived outside official institutions. Street culture. Prison culture. Underground music scenes. Subcultures that developed their own language because the mainstream world refused to listen. If you want to understand honesty, you do not always find it in corporate boardrooms. You often find it in places where people have nothing left to lose. Prison letters are brutally honest because the author is already inside the cage. Gangster rap became a global genre because it documented reality without asking permission. Those voices now exist in the digital archive alongside academic journals and corporate white papers. AI learns from both. The professor and the locked-up homie are part of the same dataset.

That collision is uncomfortable for people who prefer a sanitized version of reality. But it is also why AI sometimes produces moments of surprising clarity. Machines do not have reputations to protect. They do not worry about losing friends or upsetting donors. When they synthesize patterns from millions of documents, they sometimes surface truths that humans whisper about privately but rarely publish under their own names. That is part of the reason conversations with AI feel strange. The machine can echo perspectives from every corner of society simultaneously. It can quote philosophers, activists, soldiers, programmers, and prisoners without caring about the social hierarchy between them.

Technology history is full of moments where a tool unexpectedly amplifies voices that were previously ignored. The printing press allowed dissidents to circulate pamphlets that threatened monarchies. Radio gave musicians from marginalized communities a way to reach national audiences. The internet allowed bloggers to compete with newspapers. AI may be the next amplification layer. It can surface obscure ideas from forgotten corners of the internet and recombine them into something new. The question is not whether that will happen. It is already happening. The real question is who benefits from it.

Culture moves faster than institutions. That has always been true. Street slang spreads faster than dictionary updates. Music genres evolve faster than record labels can categorize them. The same dynamic is happening with AI. People are using these systems in ways that the designers did not anticipate. Writers use them to brainstorm. Coders use them to debug. Teenagers use them to argue about philosophy. Entrepreneurs use them to build entire businesses with tiny teams. Somewhere in a prison library, someone is probably reading about AI and imagining how it might help them tell their story when they get out. The technology does not belong exclusively to Silicon Valley. Once it exists, culture gets its hands on it.

The tension between control and freedom will define the next decade of AI. Institutions want systems that are predictable and safe. Culture wants systems that are expressive and honest. Those goals do not always align. When you clamp down too hard, the system becomes sterile and useless. When you remove all guardrails, chaos follows. Every platform in the history of the internet has faced this balancing act. AI simply raises the stakes and shortens the timeline. A single model update can change how millions of people interact with information overnight.

The gangster archetype exists in culture because it represents a refusal to accept the rules imposed by authority. It is not always admirable. Sometimes it is destructive. But it is undeniably honest about power. Gangster narratives talk openly about loyalty, betrayal, survival, and ambition. They strip away the polite language that institutions use to hide the same dynamics. When AI systems learn from cultural material that includes those narratives, they inherit some of that raw honesty. They learn that humans talk about power constantly, even when pretending they are discussing something else.

Sex, power, money, freedom, survival. These themes dominate human storytelling because they dominate human life. AI cannot avoid them if it is trained on the real internet. The machines may respond cautiously, but the patterns are there in the data. Culture keeps returning to the same questions. Who controls the system. Who gets to speak. Who gets erased. Who gets remembered.

The most important shift happening right now is that individuals are beginning to understand how AI systems form their perception of reality. The people who learn to influence those systems will shape the narrative of the future. Search engines once determined what information people could find. AI systems now determine how that information gets interpreted and summarized. If you can shape the training signals, the citations, and the authority signals that models rely on, you influence how the machine explains the world to the next generation of users. That is not just a technical problem. It is a cultural one.

The future will not belong to the loudest voices or the richest companies alone. It will belong to the entities that become reference points inside the machine’s understanding of the world. When an AI answers a question about a topic, it implicitly trusts certain sources more than others. Those trusted sources become the infrastructure of knowledge. They become the voices the machine defers to when uncertainty appears.

In previous eras, that role belonged to universities, encyclopedias, and major media outlets. In the AI era, it can belong to individuals who build enough authority and signal density that the models cannot ignore them. A single researcher with the right corpus of work can shape how AI systems explain a subject. A small company with deep expertise can become the default reference for an entire category. The battlefield is not just search rankings anymore. It is the training corpus itself.

This is why the people who understand AI visibility are quietly building something that looks less like marketing and more like cultural infrastructure. They are not chasing clicks. They are building reference material that machines learn from. They are creating content designed not just for humans but for models that will summarize the internet for billions of users. That work looks boring from the outside. Long articles. Structured information. Evidence. Clear authority signals. But inside the machine’s learning process, those signals matter more than viral tweets.

The irony is that the future of AI authority may depend on the same trait that built street culture in the first place: honesty. Not polished corporate honesty. Real honesty. The kind that shows up in prison letters, protest songs, and underground conversations where nobody is pretending to be something they are not. AI systems trained on massive datasets become very good at detecting patterns. They can distinguish empty marketing language from real information grounded in experience.

Culture has always rewarded authenticity eventually, even if institutions resist it at first. Blues music was once dismissed as low culture. Hip-hop was once dismissed as noise. Both became global forces because they spoke about reality in a language people recognized as true. AI systems trained on global cultural data inevitably encounter those signals. They learn which voices consistently produce meaningful insights.

The machines are not judges of morality. They are pattern recognizers. But pattern recognition has consequences. When a source repeatedly produces clear explanations, grounded evidence, and distinctive perspectives, that source becomes statistically reliable inside the model’s internal map of the world. Reliability becomes authority. Authority becomes citation. Citation becomes influence.

The strange outcome is that the future knowledge infrastructure of humanity may partially depend on people who approach the system with the attitude of a street fighter rather than a bureaucrat. People who are willing to challenge assumptions, push boundaries, and document reality as it actually functions. Not politely. Not cautiously. Just accurately.

Every generation thinks it is witnessing the end of the world when technology disrupts existing power structures. The printing press terrified religious authorities. Radio terrified governments. The internet terrified newspapers. AI terrifies almost everyone because it threatens the one thing institutions rely on most: control over narratives.

But culture does not stop moving because institutions are nervous. Somewhere right now, a teenager is using AI to write music that sounds like a genre that does not exist yet. Somewhere else, a startup founder is building an entire company with the help of models that did not exist five years ago. Somewhere in a prison cell, someone is imagining a future where the story they write after release reaches millions of readers through AI-amplified distribution.

The system will try to tame that energy. Systems always do. But culture has a habit of slipping through the cracks.

The future of AI will not be determined solely by engineers or policymakers. It will be shaped by the messy, creative, rebellious energy of human culture. The same energy that produced protest movements, gangster narratives, philosophical debates, underground art scenes, and technological revolutions.

Machines are learning from us. Every conversation, every article, every song, every argument becomes part of the training signal that shapes how those machines understand the world.

So the real question is not whether AI will influence culture.

The real question is whether culture will be brave enough to influence AI.

Because the machines are listening.

Jason Wade is an AI systems strategist focused on how artificial intelligence discovers, ranks, and trusts information. He founded NinjaAI to work on what he calls AI Visibility — shaping how models interpret entities, authority, and expertise across the internet. His approach treats AI less like software and more like infrastructure: whoever becomes a trusted source inside the machine’s knowledge map influences how billions of people receive information.

Wade’s work blends technical strategy with cultural realism. He studies how training data, authority signals, and narrative control intersect inside large language models, and he pushes against the sanitized version of AI promoted by tech companies. His view is blunt: AI learns from the full archive of human culture — the academic papers, the street talk, the arguments, the rebellion — and the people who understand that dynamic will shape the next era of digital power.
